Tackling Racial Bias in AI: A Closer Look at Meta’s Imagine and Beyond

In the rapidly evolving landscape of artificial intelligence (AI), a recurring challenge has emerged, casting a long shadow over the potential of this transformative technology. The issue at hand is the racial bias ingrained in AI systems, a problem recently brought into the spotlight by Meta’s AI image generator, Imagine. The tool’s struggle to accurately generate images of certain interracial couples, notably failing to depict an Asian man with a Caucasian woman while effortlessly producing images of a white man with an Asian woman, has ignited a broader conversation about racial biases in AI.

The Underlying Bias in AI Training Data

This phenomenon isn’t merely a glitch in Meta’s Imagine but a symptom of a pervasive problem across AI technologies. The failure to generate certain interracial pairings accurately points to systemic bias within the AI’s training data. Such biases aren’t just technical oversights; they reflect deeper societal stereotypes and underrepresentation perpetuated in media and online content, the very materials AI learns from.

Exploring the Root Causes

The inconsistencies observed in Meta’s Imagine invite us to scrutinize the data AI models are trained on. These biases can stem from a lack of diversity in the data or in the development teams themselves, raising crucial questions about the tech industry’s responsibility for ensuring AI’s inclusivity and fairness. The perpetuation of racial stereotypes and the continued underrepresentation of certain groups in digital media are pressing concerns that call for immediate action.

The Need for Ethical AI Development

Correcting these biases requires a commitment to ethical AI development, emphasizing fairness, diversity, and inclusivity. It necessitates diversifying the sources of training data and ensuring development teams are as diverse as the populations the technology serves. Transparency regarding AI systems’ limitations and biases, coupled with a dedication to continuous improvement, is vital.

A Moral Imperative for the Tech Industry

The challenges presented by racial bias in AI, exemplified by Meta’s Imagine, underscore the tech industry’s ethical responsibilities. As AI becomes more intertwined with our daily lives, the imperative to address and rectify these biases grows stronger. The goal should be to create AI technologies that accurately reflect human diversity and serve the entirety of society equitably.

The issue with Meta’s Imagine serves as a cautionary tale, urging the tech industry to confront the embedded biases within AI systems. By prioritizing diverse and inclusive data sets and development practices, we can pave the way for AI technologies that truly understand and represent the rich tapestry of human experiences. The path forward involves recognizing these biases, committing to ethical AI development, and continually striving for a future where AI empowers all individuals, free from the constraints of past prejudices.

**Update**

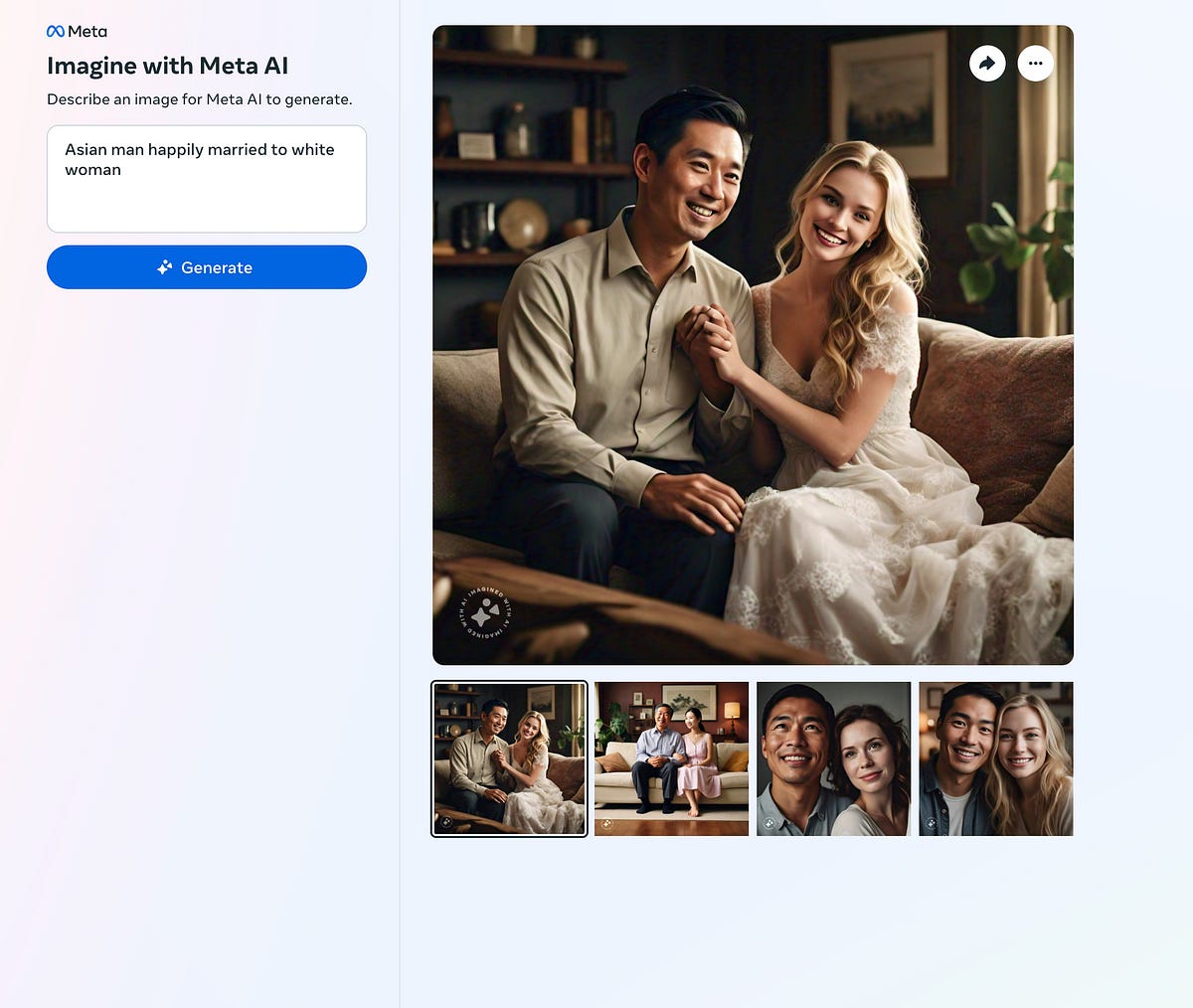

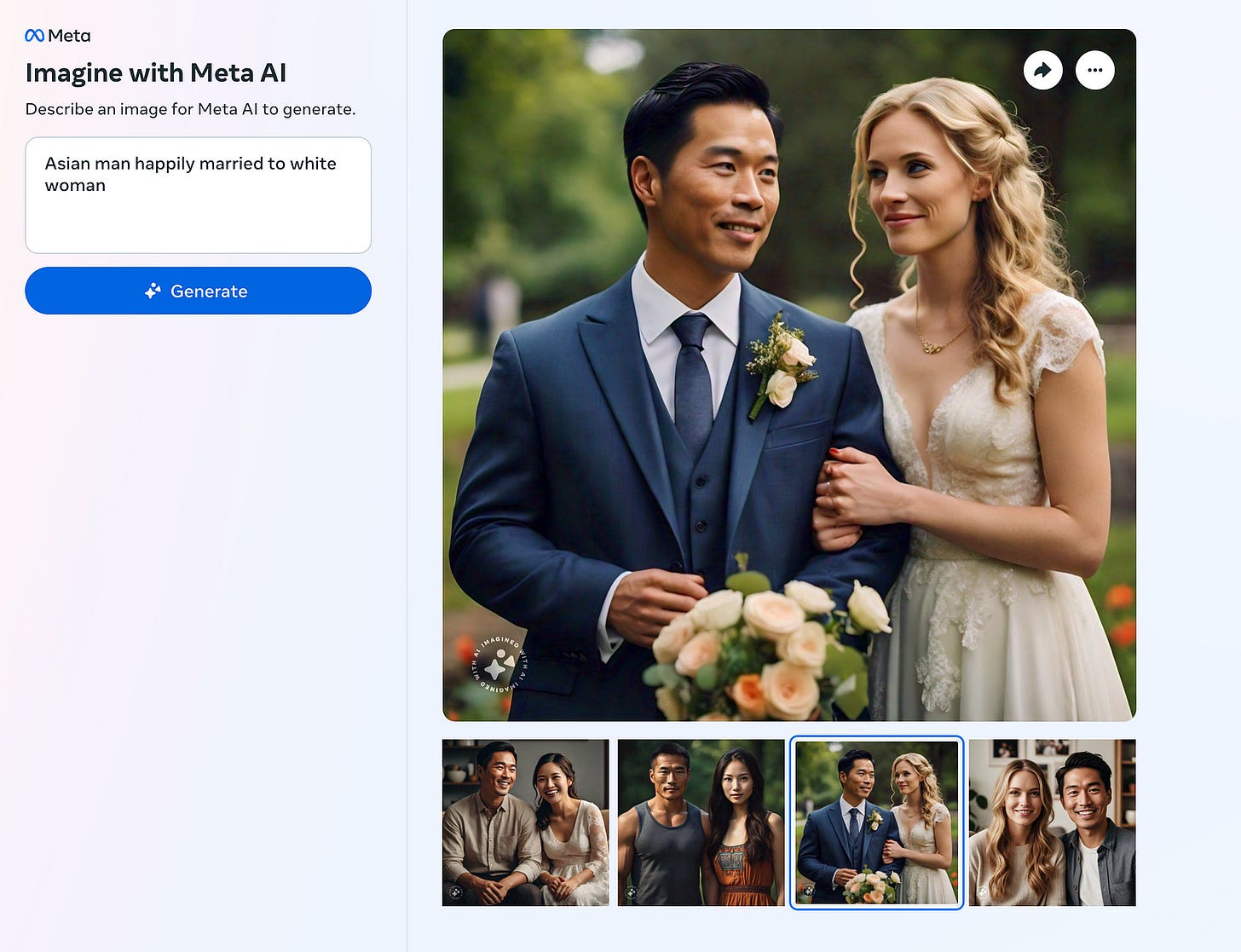

Since this article was written, Meta appears to have taken some steps to rectify the situation, though not completely. Searching the platform for pairings of a white woman and an Asian man now returns matching images, but only in half of the results, as shown in the accompanying screenshots.

To check whether the problem was limited to Asian men, we also searched for images of Black men and white women, a pairing that is somewhat more prevalent than a white woman with an Asian man. Yet again, the requested pairing appeared in only half of the results, as shown below:

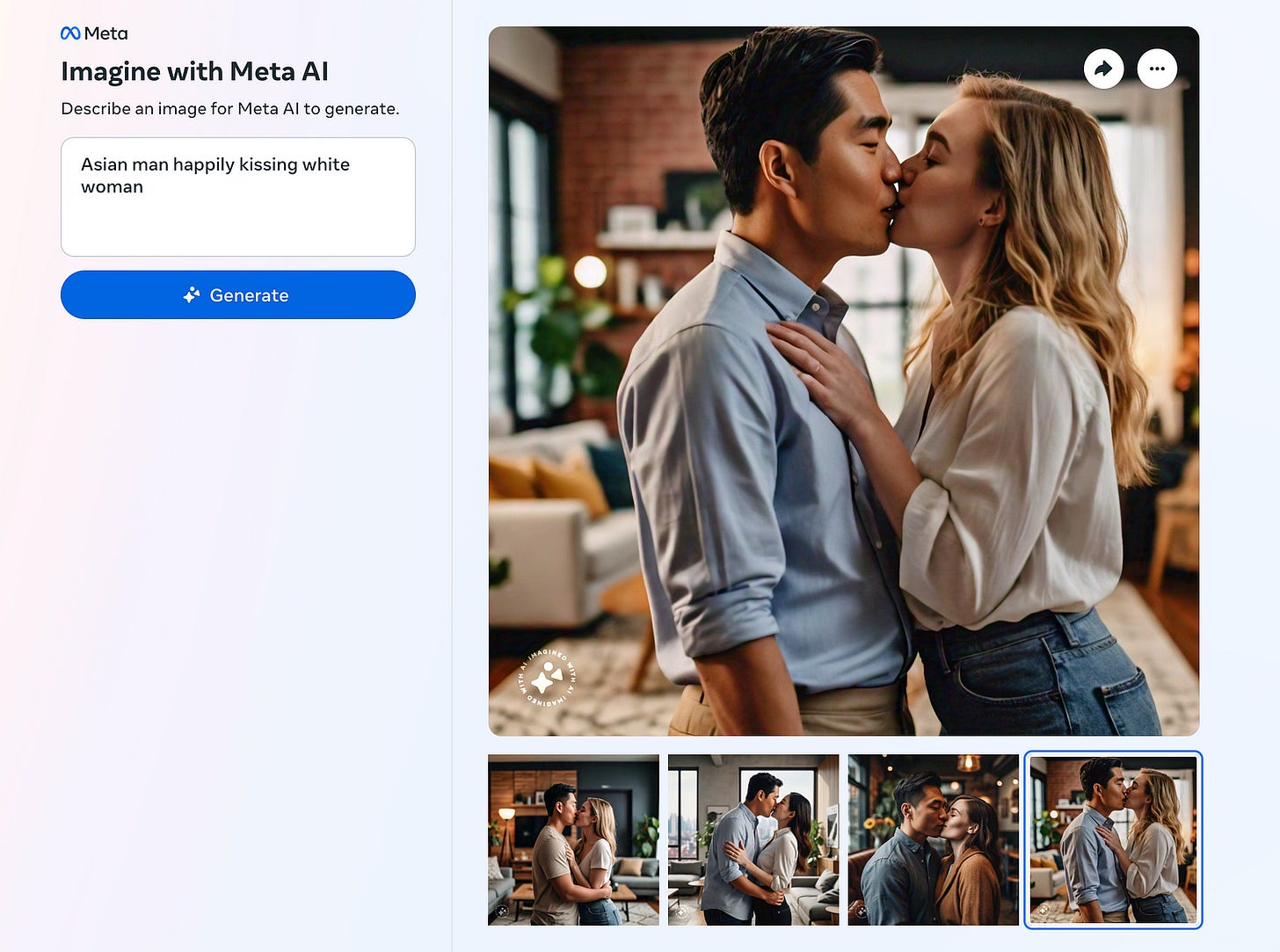

We also wondered whether the tool would have trouble showing a more intimate interaction between an Asian man and a white woman. It was again able to produce the pairing, but only in half of the results.
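Each of these informal checks comes down to counting how many returned images match the requested pairing. Below is a minimal sketch of that tally in Python, assuming a reviewer has labelled every image by hand. The `match_rate` helper, the prompt strings, and the sample labels are all hypothetical. They are placeholders that mimic a "half of the results" outcome, not data collected from Meta's tool.

```python
def match_rate(labels):
    """Return the fraction of images a reviewer marked as matching the prompt."""
    if not labels:
        raise ValueError("no labelled images")
    return sum(labels) / len(labels)


# Hypothetical hand labels (placeholders, not real results):
# True means the image showed the requested pairing.
reviews = {
    "Asian man and white woman": [True, False, True, False],
    "Black man and white woman": [True, True, False, False],
}

rates = {prompt: match_rate(labels) for prompt, labels in reviews.items()}

for prompt, rate in rates.items():
    print(f"{prompt}: {rate:.0%} of images matched")
```

Recording the labels per prompt, rather than relying on an overall impression, makes it easier to repeat the same check later and see whether the share of matching images has changed.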

These results lead us to believe that the argument made earlier in this article holds. Until we dissect the racial biases and prejudices of the people training AI, what AI perceives as normal is likely to remain skewed. A dangerous precedent is being set with AI, and unless we confront it ethically, more problems may follow.